Engineers from the Massachusetts Institute of Technology turned to a game-night staple to see if a robotic arm could learn from its physical interactions with objects.

As it turns out, it could, albeit slowly. The MIT researchers released a study and a time-lapse video of an industry-standard ABB robotic arm playing Jenga.

A robot might seem better equipped than a human player to keep calm while prying tiny wooden blocks from a fragile structure. But the real payoff lies in how the robot determines which block to try, a process that could open up new possibilities for manufacturing.

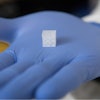

The research team outfitted their robot with a gripper, force sensor and camera, then ran it through about 300 attempts on a Jenga tower.

A computer recorded whether the attempts were successful, and the robot developed a model to predict how other blocks would behave based on its visual and tactile measurements.

By the end, the robot could gently prod a Jenga tower looking for vulnerable pieces, and even play against a few human volunteers.

Researchers, however, are more interested in the ways robots could apply physical lessons to new tasks, such as separating recyclables from trash or assembling components in electronic devices.