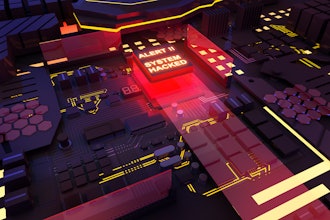

Earlier this month, enemy hackers posted a deepfake of Ukrainian President Volodymyr Zelenskyy asking his citizens to lay down their weapons, once again raising questions about the harmful implications of advances in AI technology.

This incident brought to light the long-term harm that could be caused when tech-savvy hackers interfere in elections, a topic that will be discussed heavily as we enter the midterms.

In Ukraine, the video appeared on social media and briefly on TV. Although Facebook, YouTube, and Twitter pulled it, it was "boosted" on Russian social media. According to NPR, "the video shows a passable lip-sync," but "viewers quickly pointed out that Zelensky's [sic] accent was off and that his head and voice did not appear authentic upon close inspection."

President Zelenskyy did not waste any time responding, which could indicate the growing power of deepfake intervention in the war effort. "We are defending our land, our children, our families. So we don't plan to lay down any arms until our victory," he said.

Although deepfakes were expected to appear in anti-Ukraine propaganda, the speed at which the President responded suggests a real fear of the damage these synthetic videos can bring about.

The Depth of Fake

Deepfakes - a combination of "deep learning" and "fake" - are forged and manipulated videos that can be made with relatively basic knowledge of technology. "The new threat is that we have democratized access to a very powerful technology that allows the average person… to create what used to require a Hollywood studio," said digital forensics expert Hany Farid.

These synthetic videos are used for mischief more often than not: to produce non-consensual intimate imagery ("revenge porn"), to commit fraud, and to spread disinformation.

Are there positives to synthetic media?

Fans of the Star Wars saga were exposed to deepfakes in 2016's Rogue One, with "cameos" by Peter Cushing and Carrie Fisher. Cushing reprised his role as Grand Moff Tarkin from the original 1977 film, even though the actor passed away in 1994. Reaction to this manufactured Cushing was mixed, with criticism ranging from his likeness resembling a video game character to the ethical considerations.

John Knoll, the Chief Creative Officer at Industrial Light and Magic, strongly disagreed with the notion that this deepfake was unethical. "This was done in consultation and cooperation with his estate," explained Knoll. They may have received the appropriate legal permissions, but this digital forgery of the late actor is still up for ethical debate.

Since then, a deepfake Mark Hamill appeared in both The Mandalorian and The Book of Boba Fett. Working with the visual effects studio Lola, the production team used Hamill and a body double to resurrect this legacy character. "They effectively reproduced a de-aged version of Mark for the shots by combining the texture from his face and also [the body double's] younger face," explained Richard Bluff of ILM.

Acting "Younger"

Although this second appearance of Luke Skywalker wasn't met with the same vitriol as his guest spot on The Mandalorian, it does raise the question: is a synthetic forgery really necessary to the show?

What does it mean for the future of acting if roles go to digital reincarnations? Studios may find deepfakes a more economical casting decision than living, breathing actors.

The fantasy and science fiction genres are not the only ones to use this cutting-edge technology. Martin Scorsese's The Irishman and 2022's Scream "requel" use CGI de-aging in place of casting a new actor in a young role.

An unsophisticated deepfake on a TV show may result in fan condemnation. Still, a deepfake presented as reality can cause a ripple effect in which the viewer is forced to challenge the integrity of future videos. The more deepfakes that appear, the harder it will be to discern what is real from what is manufactured.

Farid warned that "casting doubt on what you see and hear and read is a very powerful weapon in the information war and deepfakes are now playing a role in that."

He and Sophie J. Nightingale recently conducted a study in which they concluded: "synthesis engines have passed through the uncanny valley and are capable of creating faces that are indistinguishable - and more trustworthy - than real faces."

How Does Social Media Handle These Facial Forgeries?

Meta promised to "remove content that is likely to directly contribute to interference with the functioning of political processes and certain highly deceptive manipulated media." While it may flag or take down posts, the social media powerhouse's response is reactive. Removing inappropriate content does not prevent users from - at least briefly - posting dangerous media.

The video of Zelenskyy was shared and commented upon before it was taken down, and that deception could have negative consequences as the war continues.

Farid and Nightingale end their study with a plea to "encourage those developing these technologies to consider whether the associated risks are greater than their benefits."

Unfortunately, the deepfake of Zelenskyy might not be the last we see in the coming weeks. Social media platforms can't catch everything instantaneously, and the more deepfakes appear, the more likely users are to mistake them for reality.

While advances in AI have enabled the return of Grand Moff Tarkin, Princess Leia, and Luke Skywalker, these same technologies can and will continue to distort perceptions of reality, potentially blurring the lines between real and fake forever.