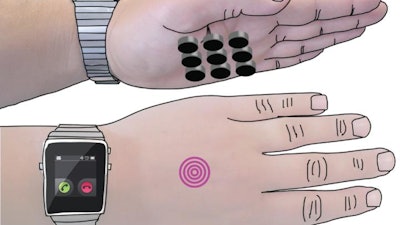

A University of Sussex-led study, funded by the Nokia Research Center and the European Research Council, is the first to find a way for users to feel what they are doing when interacting with displays projected on their hand.

This solves one of the biggest challenges for technology companies that see the human body, particularly the hand, as the ideal display extension for the next generation of smartwatches and other smart devices.

Current ideas rely on vibrations or pins, both of which need contact with the palm to work, interrupting the display.

However, this innovation, called SkinHaptics, sends sensations to the palm from the other side of the hand, leaving the palm free to display the screen.

The device uses ‘time-reversal’ processing to send ultrasound waves through the hand. This technique is effectively like ripples in water but in reverse – the waves become more targeted as they travel through the hand, ending at a precise point on the palm.
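As a rough illustration of the time-reversal idea, the sketch below uses a toy model in which each wave is just a delayed spike. It is not the SkinHaptics implementation: the transducer layout, the speed of sound, the sample rate, and all function names are assumptions chosen for the example. An impulse from the target point is "recorded" at each transducer, each recording is reversed in time and re-emitted, and the re-emitted spikes all arrive at the target at the same instant.

```python
import math

# Illustrative assumptions, not values from the study.
SPEED = 1540.0      # m/s, a commonly quoted speed of sound in soft tissue
FS = 10_000_000     # samples per second in this toy simulation


def delay_samples(src, dst):
    """Travel time between two 2D points, in whole samples (pure-delay model)."""
    return round(math.dist(src, dst) / SPEED * FS)


def record_impulse_responses(target, transducers, n):
    """An impulse leaves the target; each transducer records one delayed spike."""
    responses = []
    for pos in transducers:
        recording = [0.0] * n
        recording[delay_samples(target, pos)] = 1.0
        responses.append(recording)
    return responses


def field_at(point, transducers, emissions, n):
    """Sum the emitted signals as they arrive at `point`."""
    total = [0.0] * (2 * n)
    for pos, signal in zip(transducers, emissions):
        d = delay_samples(pos, point)
        for k, value in enumerate(signal):
            if value:
                total[k + d] += value
    return total


def time_reversal_peak(target, probe, transducers, n=1024):
    """Peak amplitude at `probe` when the array refocuses on `target`."""
    responses = record_impulse_responses(target, transducers, n)
    emissions = [r[::-1] for r in responses]  # the time-reversal step
    return max(field_at(probe, transducers, emissions, n))


if __name__ == "__main__":
    # Hypothetical layout: four transducers on the back of the hand (y = 0),
    # target on the palm side, 2 cm away.
    transducers = [(-0.015, 0.0), (-0.005, 0.0), (0.005, 0.0), (0.015, 0.0)]
    target = (0.0, 0.02)
    print("peak at target:", time_reversal_peak(target, target, transducers))
    print("peak 1 cm away:", time_reversal_peak(target, (0.01, 0.02), transducers))
```

In this model the peak at the target equals the number of transducers, because every reversed spike arrives there in the same sample, while at the nearby point the arrivals are spread out in time and the peak is lower. Real tissue adds scattering, attenuation, and reflections that a pure-delay model ignores.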

It draws on a rapidly growing field of technology called haptics, the science of applying touch sensation and control to interaction with computers and technology.

Professor Sriram Subramanian, who leads the research team at the University of Sussex, says that technologies will inevitably need to engage other senses, such as touch, as we enter what designers are calling an ‘eye-free’ age of technology.

He says: “Wearables are already big business and will only get bigger. But as we wear technology more, it gets smaller and we look at it less, and therefore multisensory capabilities become much more important.

“If you imagine you are on your bike and want to change the volume control on your smartwatch, the interaction space on the watch is very small. So companies are looking at how to extend this space to the hand of the user.

“What we offer people is the ability to feel their actions when they are interacting with the hand.”

Sriram Subramanian is a Professor of Informatics at the University of Sussex, where he leads the Interact Lab and is a member of the Creative Technology Group.

The findings were presented at the IEEE Haptics Symposium 2016 in Philadelphia, USA, by the study’s co-author Dr Daniel Spelmezan, a research assistant in the Interact Lab. The symposium concludes today (Monday, April 11, 2016).